Technical AI Safety Puzzle #1

$2,500+ in prizes. Neural networks often represent features linearly in their activations. One feature in ours isn’t. Can you interpret it?

The puzzle

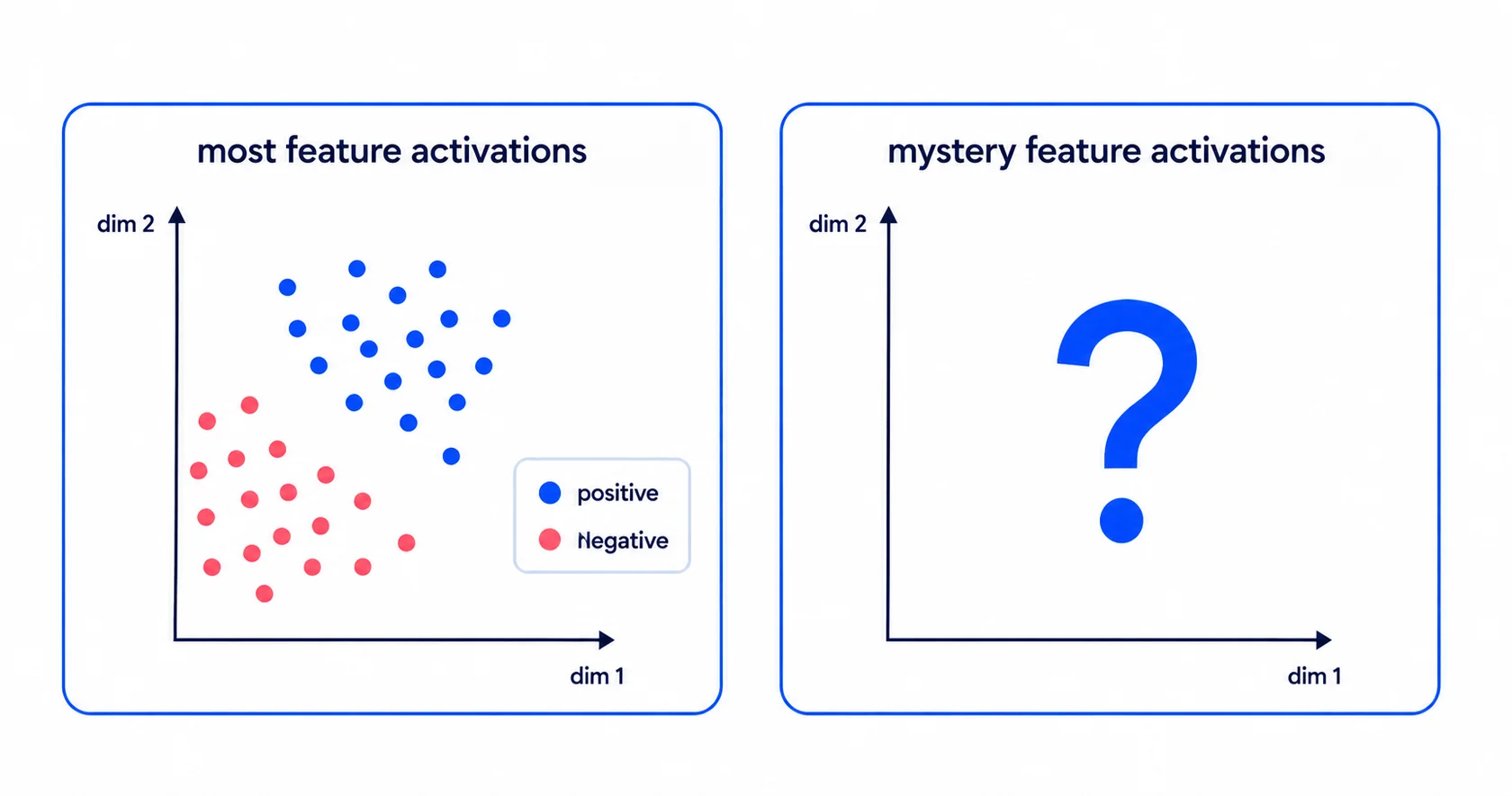

We trained a model on short text inputs to predict eight binary features simultaneously (e.g. whether the input contains a person’s name, mentions a food, or is phrased as a question). After a particular layer of this model (call it layer L), seven of these features are represented linearly: each one corresponds to a single direction in activation space. One feature, however, is represented differently.
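As a toy illustration of what “represented linearly” means (a hypothetical setup, not the puzzle model): if each binary feature f_i adds f_i times a fixed direction d_i to the activation, the feature can be read back by projecting onto d_i and thresholding. The three features, the 4-dimensional space, the noise level and the 0.5 threshold are all assumptions made for this sketch.

```python
import random

# Hypothetical toy setup (not the puzzle model): 3 binary features, each
# with its own fixed unit direction in a 4-dimensional activation space.
DIRECTIONS = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def encode(features, rng, noise=0.1):
    """Linear encoding: activation = sum_i f_i * d_i + small Gaussian noise."""
    act = [rng.gauss(0.0, noise) for _ in range(4)]
    for f, d in zip(features, DIRECTIONS):
        for j in range(4):
            act[j] += f * d[j]
    return act


def read_feature(act, i, threshold=0.5):
    """Read feature i back by projecting onto its direction and thresholding."""
    return 1 if dot(act, DIRECTIONS[i]) > threshold else 0


rng = random.Random(0)
examples = [[rng.randint(0, 1) for _ in range(3)] for _ in range(200)]
acts = [encode(f, rng) for f in examples]
recovered = [[read_feature(a, i) for i in range(3)] for a in acts]
```

Because the directions in this sketch are orthogonal, each projection sees only its own feature plus noise. In a trained model the directions are learned, need not be orthogonal, and would have to be estimated, for example with a linear probe.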

Your job is to figure out which feature it is and how it is represented.

Your three tasks

1. Find it. Identify which of the eight features is not represented linearly.

2. Explain how it is represented. Describe the geometric structure the model uses to represent that feature at layer L, and show the analysis that convinced you.

3. Train a model with an even weirder representation. Train your own model that encodes this feature (or another one) in a more interesting way than ours does. “More interesting” is yours to define and defend.
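For task 1, one possible starting point (a sketch, not the organisers’ method) is to compare, feature by feature, a linear probe against a nonlinear baseline: a feature that a nonlinear probe decodes well but a linear probe does not is a candidate for the odd one out. The activations below are synthetic and hypothetical: features 0 and 1 are linear, while feature 2 is encoded as the radius of a circle, a structure chosen only for illustration. The difference-of-means probe and the leave-one-out k-nearest-neighbour baseline are stand-ins; with the real model you would collect activations at layer L instead.

```python
import math
import random


def mean(vectors):
    """Element-wise mean of equal-length vectors."""
    n = len(vectors)
    return [sum(v[j] for v in vectors) / n for j in range(len(vectors[0]))]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def linear_probe_accuracy(acts, labels):
    """Difference-of-means direction, thresholded midway between class means."""
    mu1 = mean([a for a, y in zip(acts, labels) if y == 1])
    mu0 = mean([a for a, y in zip(acts, labels) if y == 0])
    d = [p - q for p, q in zip(mu1, mu0)]
    thr = (dot(mu1, d) + dot(mu0, d)) / 2
    preds = [1 if dot(a, d) > thr else 0 for a in acts]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def knn_probe_accuracy(acts, labels, k=5):
    """Nonlinear baseline: leave-one-out k-nearest-neighbour majority vote."""
    correct = 0
    for i, a in enumerate(acts):
        dists = sorted(
            (math.dist(a, b), labels[j]) for j, b in enumerate(acts) if j != i
        )
        votes = sum(y for _, y in dists[:k])
        correct += (1 if votes > k // 2 else 0) == labels[i]
    return correct / len(acts)


def make_data(n, rng, noise=0.15):
    """Hypothetical activations: features 0 and 1 are linear (dims 0 and 1);
    feature 2 is encoded as the radius of a circle in dims 2-3."""
    acts, labels = [], []
    for _ in range(n):
        f = [rng.randint(0, 1) for _ in range(3)]
        theta = rng.uniform(0.0, 2 * math.pi)
        radius = 3.0 if f[2] else 1.0
        act = [3.0 * f[0], 3.0 * f[1],
               radius * math.cos(theta), radius * math.sin(theta)]
        acts.append([x + rng.gauss(0.0, noise) for x in act])
        labels.append(f)
    return acts, labels


rng = random.Random(0)
acts, labels = make_data(400, rng)
results = {}
for i in range(3):
    ys = [f[i] for f in labels]
    results[i] = (linear_probe_accuracy(acts, ys), knn_probe_accuracy(acts, ys))
    print(f"feature {i}: linear={results[i][0]:.2f} knn={results[i][1]:.2f}")
```

In this toy data the linear probe on feature 2 should score close to chance while the kNN baseline scores close to 1, because the two classes have almost the same mean activation. A gap like this only shows that the feature is decodable nonlinearly; describing its geometry (task 2) needs further analysis.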

Correct submissions as of 13th May

No correct submissions yet. Be the first.

What you get

- 1st: $1,000

- 2nd: $750

- 3rd: $500

- Honourable mentions: $250 each

Any submissions that impress us will also get shout-outs on all our socials, and we’ll keep an eye out for strong candidates for our courses, programs and grants.

What you’ll submit

A single Google Doc documenting what you tried, what worked, what didn’t, and what structure emerged in the trained model. Images are encouraged. You will be judged on:

- Clarity of your explanations

- Strength of the evidence you generate for your answers

- Novelty of the model you train

Rules

- Please do not share answers publicly online until after 12th June.

- Use of LLMs for understanding the puzzle and for coding is encouraged.

- Please write your submission in your own words. We will be checking!

Deadline: 12th June